Partners

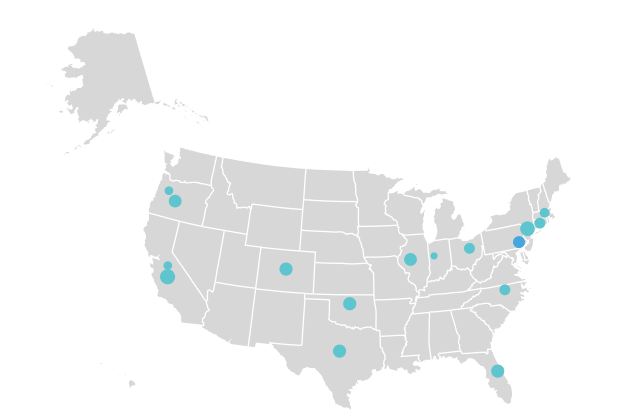

More than 400 organizations, including foundations, nonprofits, and public agencies, have partnered with Root Cause to improve people’s lives.

Work With Us